M3 Blog

Everything you wanted to know about mantis breeding…

posted by Jenny on April 3, 2019

Check out our guide to mantis breeding, by top insect technician Adam Simmons.

A Novel Form of Stereo Vision in the Praying Mantis

posted by Jenny on February 17, 2018

Our latest paper on mantis stereopsis has just come out in Current Biology. Briefly, we find that mantis stereopsis operates very differently from humans’: it is based on temporal change, and does not require the images to be correlated.

We made a video abstract to explain the paper’s key findings and significance:

Featured in National Geographic documentary

posted by Jenny on February 24, 2016

National Geographic featured our work on mantis 3D vision in their recent documentary “Explorer: Eyes Wide Open”. Here’s a clip:

Demo videos from our mantis 3D glasses paper

posted by Jenny on January 20, 2016

We uploaded six nice demo videos as Supplementary Material for our Scientific Reports paper. Unfortunately, the links are currently broken (I have emailed about this), and in any case the videos are provided in a slightly clunky way: you have to download them. So I thought I would write a blog post explaining the videos here.

Here is a video of a mantis shown a 2D “bug” stimulus (zero disparity). A black disk spirals in towards the centre of the screen. Because the disk is black, it appears as a dark disk to both eyes, i.e. it’s an ordinary 2D stimulus. The mantis therefore sees it, correctly, in the screen plane, 10 cm in front of the insect. The mantis knows its fore-arms can’t reach that far, so it doesn’t bother to strike.

Next, here’s a video of a mantis shown the same bug stimulus in 3D. Now the disk is shown in blue for the left eye and green for the right (bearing in mind that the mantis is upside down). Because the mantis’s left eye is covered by a green filter, the green disk is invisible to it – it’s just bright on a bright background, i.e. effectively not there, whereas the blue disk appears dark on a bright background.

This is a “crossed” geometry, i.e. the lines of sight from each disk to the eye that can see it cross over in front of the screen, at a distance of about 2.5 cm in front of the insect. This is well within the mantis’s catch range, so the insect strikes out trying to catch the bug. You can sometimes see children doing the same thing when they watch 3D TV for the first time!
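The crossed geometry can be worked through with simple triangulation. The sketch below is a minimal model, not code from the paper: it assumes each eye is a single point, the two eyes lie in a plane parallel to the screen, and the disks are displaced only horizontally. The function name `simulated_distance` and its parameters are hypothetical. With interocular separation I, screen distance V and on-screen separation P (positive when the left eye's disk lies to the right of the right eye's disk, i.e. crossed), the lines of sight meet at distance V * I / (I + P). I and P must share units; the result is in the units of V.

```python
from typing import Optional


def simulated_distance(interocular: float, screen_dist: float,
                       separation: float) -> Optional[float]:
    """Distance from the eyes at which the two lines of sight intersect.

    Hypothetical helper for illustration. Model assumptions:
    - eyes are points at x = -interocular/2 (left) and +interocular/2 (right);
    - the screen is a plane at distance screen_dist in front of the eyes;
    - separation = x_left_image - x_right_image on the screen, so positive
      values are crossed disparity, zero is the screen plane, and negative
      values are uncrossed disparity.
    Returns None when the lines of sight are parallel or diverge, i.e. no
    single point in front of the animal matches both eyes' images.
    """
    denom = interocular + separation
    if denom <= 0:
        return None
    return screen_dist * interocular / denom


# Screen 10 cm away; the interocular distance is not given in this post,
# so it is set to 1 unit and the separation is expressed in the same units.
print(simulated_distance(1.0, 10.0, 0.0))   # zero disparity: 10.0 (screen plane)
print(simulated_distance(1.0, 10.0, 3.0))   # crossed, P = 3I: 2.5
print(simulated_distance(1.0, 10.0, -3.0))  # disks swapped: None
```

Under this model, placing the virtual bug at 2.5 cm with the screen at 10 cm requires I / (I + P) = 0.25, i.e. an on-screen separation of three interocular distances. Swapping the two disks flips the sign of P, and with P = -3I the lines of sight diverge, which is the situation in the control experiment described next.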

Here’s a slo-mo version recorded with our high-speed camera. Unfortunately the quality has taken a big hit, but at least you get to see the details of the strike…

Sceptical minds (the best kind) might wonder if this is the correct explanation. What if the filters don’t work properly and there’s lots of crosstalk? Then the mantis would be seeing a single dark disk in our “2D” condition and two dimmer disks in our “3D” condition. Maybe the two disks are the reason it strikes, nothing to do with the 3D. Or maybe there’s some other artefact. As a control, we swapped the green and blue disks over, effectively swapping the left and right eyes’ images. Now the lines of sight don’t intersect at all, i.e. this image is not consistent with a single object anywhere in space. Sure enough, the mantis doesn’t strike. Obviously, in different insects we put the blue/green glasses on different eyes, so we could be sure the difference really was due to the binocular geometry, not the colours or similar confounds.

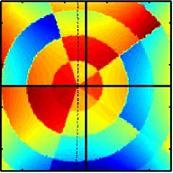

Here’s the figure from our paper which illustrates this geometry and also shows the results:

More insects in specs

posted by Jenny on January 19, 2016

Thanks to AP TV for making this nice video about our mantis 3D glasses, which appeared in the Telegraph.

Crosstalk with insect 3D glasses

posted by Jenny on January 9, 2016

Our first paper on praying mantis 3D vision has just come out in Scientific Reports: “Insect stereopsis demonstrated using a 3D insect cinema” by Nityananda, Tarawneh, Rosner, Nicolas, Crichton, Read. There’s a press release here.

One issue we discuss in the paper is [the problem of crosstalk](https://www.yahoo.com/tech/scientists-gave-praying-mantises-tiny-142122820.html). This is why we ended up using our anaglyph (green/blue) 3D glasses after initially exploring circularly polarising glasses.

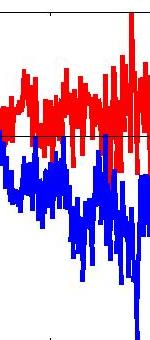

Here are two videos we prepared to illustrate the crosstalk experienced with the two systems.

The first video shows how bad the crosstalk is with a patterned-retarder display, which separates the images by circular polarisation. Crosstalk is very viewing-angle dependent in these displays, and we think that because the mantises are so close to the screen, they are sometimes seeing the target at very oblique angles, and this is why they get so much crosstalk.

In contrast, anaglyph glasses, which separate the images by wavelength, show very low crosstalk regardless of viewing angle. We think this is why they work so much better, as we demonstrate in the paper.
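To make “how bad the crosstalk is” concrete: a common way to quantify crosstalk for stereoscopic displays, though not necessarily the measure used in our paper, is the luminance that leaks through one eye's filter from the other eye's image, divided by the luminance of the intended image, with the black level subtracted from both. The sketch below assumes you have three photometer readings taken through the same filter; the function name and the example readings are hypothetical.

```python
def crosstalk_ratio(leak: float, signal: float, black: float) -> float:
    """Fraction of the unintended image that leaks through one eye's filter.

    Hypothetical helper. All three values are luminances measured through
    the same filter (e.g. the left-eye filter):
      leak   - screen shows the stimulus only in the *other* eye's channel
      signal - screen shows the stimulus only in *this* eye's channel
      black  - screen shows black in both channels
    """
    if signal <= black:
        raise ValueError("signal must be brighter than the black level")
    return (leak - black) / (signal - black)


# Made-up readings (cd/m^2), purely to show the calculation.
print(crosstalk_ratio(leak=15.0, signal=105.0, black=5.0))  # 0.1, i.e. 10 %
```

Repeating such measurements at several viewing angles would be one way to see the angle dependence described above: for a patterned-retarder display the ratio would be expected to grow at oblique angles, while for anaglyph filters it should stay roughly constant.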

Press coverage of M3 project

posted by Jenny on April 25, 2014

Vivek’s amazing 3D glasses have been gaining some coverage in the media:

“The smallest spectacles in the world: Scientists craft 3D glasses for a PRAYING MANTIS to better understand sight” – Daily Mail

“3D Glasses For Praying Mantis Are World’s Smallest” – Huffington Post

“Praying mantis gets pair of 3D glasses… watches own version of The Fly” – Metro

“Scientists Make 3D Glasses for Praying Mantises” – Science, Space and Robots

“Testing 3D vision in praying mantises” – Newcastle University

Mantis under Infrared

posted by Ghaith on February 7, 2014

This week we acquired an infrared camera and realized that mantids glow like light bulbs under infrared!

Below are two shots of a mantis taken with a conventional camera (left) and our new infrared camera (right).

Apart from producing very cool mantis photography, this camera has the added advantage of providing a clear outline of the subject mantis in each trial. This is very useful as a first step towards building video-processing algorithms that we intend to use in place of human observers in mantis experiments.
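Because the mantis is much brighter than its surroundings under infrared, even a plain intensity threshold can separate it from the background. The sketch below is a hypothetical illustration of such a first step, not the algorithm we are developing: it assumes each frame is a greyscale image stored as a 2-D NumPy array, and the threshold value and function names are invented for the example.

```python
import numpy as np


def mantis_mask(frame: np.ndarray, threshold: float) -> np.ndarray:
    """Boolean mask of pixels brighter than `threshold` (assumed to be mantis)."""
    return frame > threshold


def bounding_box(mask: np.ndarray):
    """(row_min, row_max, col_min, col_max) of the True pixels, or None if empty."""
    rows = np.flatnonzero(mask.any(axis=1))  # rows containing any mantis pixel
    cols = np.flatnonzero(mask.any(axis=0))  # columns containing any mantis pixel
    if rows.size == 0:
        return None
    return int(rows[0]), int(rows[-1]), int(cols[0]), int(cols[-1])


# Synthetic 8-bit frame: dark background with one bright rectangular "mantis".
frame = np.full((6, 8), 20, dtype=np.uint8)
frame[2:5, 3:7] = 200
mask = mantis_mask(frame, threshold=100)
print(bounding_box(mask))  # (2, 4, 3, 6)
```

Real footage would likely need more than this, for example smoothing to suppress noise, keeping only the largest connected bright region, and tuning the threshold for each rig.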

Mantis in motion

posted by Jenny on October 8, 2013

Things are going well with the mantids. They are such great experimental subjects; I think I prefer them to humans! Certainly a lot less hassle :).

Although the main thrust of the M3 project will be about their stereo vision, we are getting increasingly excited about the great questions we can ask about their motion perception. Lisa is currently collecting data on a motion perception question, while Vivek is progressing the main stereo research arc by constructing ever more refined 3D glasses for the mantids. Ghaith is working on stimulus generation for both projects and starting to develop models of the underlying algorithms. Exciting times.

Welcome Ronny!

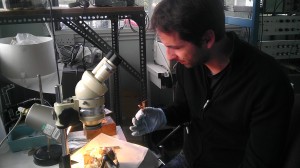

posted by Jenny on April 26, 2013

And I’m delighted to announce that Ronny Rosner is the third and final member of the M3 team. Ronny already has considerable experience studying neuronal processing in flies and locusts using both behavioural and electrophysiological approaches, including both extracellular and intracellular recordings. He’s currently in Uwe Homberg’s lab in Marburg, Germany. Ronny won’t be joining us till January 2014, though he will be visiting before then. He’s already spent a week visiting Newcastle, during which I took this photo of him in Claire Rind’s lab, about to dissect a mantis brain for us. Germany has a particularly strong tradition of insect neuroscience, so I’m delighted Ronny will be bringing that over to Newcastle to fuse with our existing insect and wider neurophysiology community. In fact I am really excited that I’ve managed to recruit such a talented team for the M3 project, and am looking forward eagerly to five years of fun science and productivity!
